Okay, okay, I know, writing about ‘intelligence’ can easily be taken the wrong way, because the term is so often used in the supremacy dreams of people who will even praise eugenics just to push their ideology. But hear me out. I’m not trying to provide a classification of ‘they are stupid’ and ‘they are smart’. Instead, my attempt is to find a definition that takes a step back and brings in everyone’s perception of reality.

What are features of ‘intelligence’?

Let’s start by identifying what ‘intelligence’ could possibly be and differentiating it from what it is not. In the end we will probably not find a ‘complete’ definition, but that’s fine: the goal isn’t a perfect definition but a good one.

Nothing exists without a relationship to something else, and this is also the case for ‘intelligence’. Everything affects everything else all the time. The effect may not be perceivable for us, but still, everything is connected with everything all the time. ‘Intelligence’ therefore describes something on a certain abstraction level.

‘Intelligence’ as a system

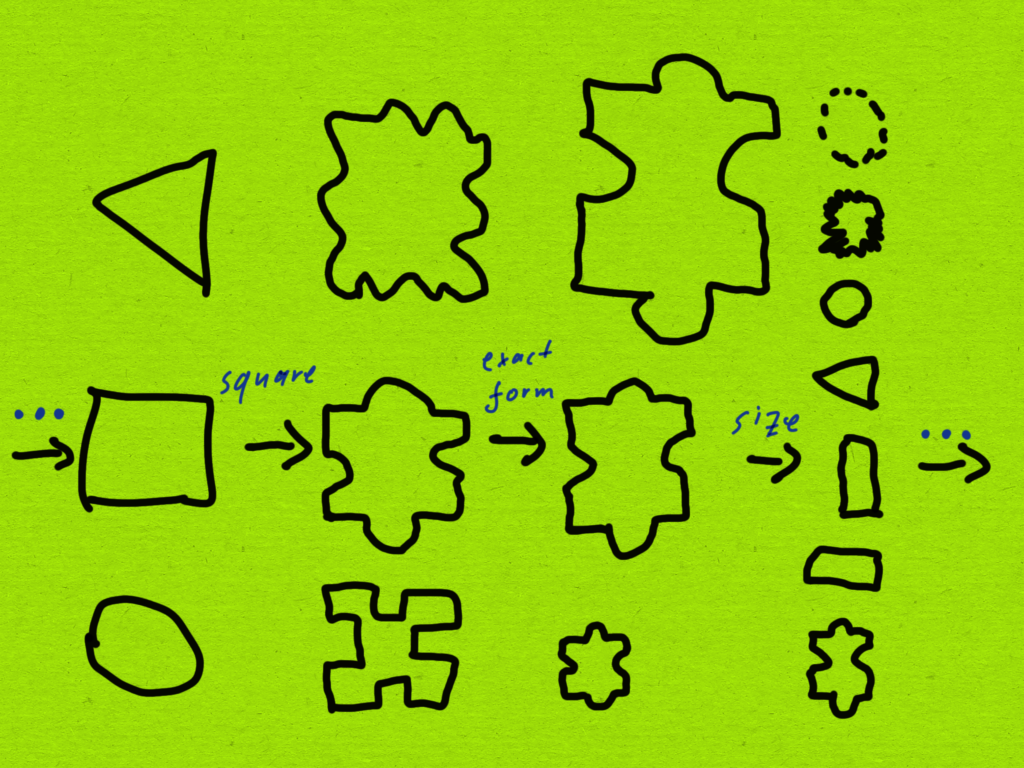

Intelligence requires the ability to perceive or be aware of something.

It requires the ability to process, which can come in the form of pattern recognition, prediction, interpolation, or generic adaptation.

In the end, it also requires a way to self-express.

Dimensions

Did you notice that I never defined at any point what is perceived, processed and expressed? This is because ‘intelligence’ exists in different dimensions. What are these dimensions? They can be a lot of different things. A very incomplete list would be: mathematical logic, audio with music as a sub-dimension, one’s own body, language, vision with painting as a sub-dimension, and so on.

Basically everything that can be perceived, in the human case what we can perceive through our senses, can be adapted to through our capability or potential for intelligence. Take language as an example. Language is entirely an abstraction layer: it contains symbols that refer to certain parts of our reality and can even describe our social reality (i.e., things we have agreed on but that aren’t actually part of physical reality). Yet there are many different languages that are spoken and used for communication in this world. You may be able to comprehend some of them but probably not all. My question to you is, does it make you non-intelligent if you don’t know a language? Does it make you intelligent if you can adapt to the different expressions of a language and reproduce the patterns within it in spoken and written form?

Language

Assuming you are able to learn another language, you express a certain intelligence in the dimension of languages. If you are able to pick up languages very fast, you have a great sensitivity for perceiving them, a great capability to process them, to recognise the patterns, predict and adapt to the form of the language, and you are capable of expressing yourself in it.

I know a lot of people who failed to learn languages at school, at least in a way that would get them good grades, but who learned them much better and faster by consuming the language in daily life and expressing themselves in it every day. This maybe shows that the input provided through school learning is actually quite limited and restricted, which makes it much more difficult for some people to adapt and learn.

The funny thing with language is that it is constantly evolving, not only globally but also in local spaces. This means that while you may be able to perceive, process and express the language, you may struggle at first when you encounter variations of it. You need to identify the patterns of how people use the language and the words within it, and adapt your understanding accordingly. Words will therefore have different meanings. The easiest way to see this is to talk with people in the West, for example, about terms like ‘capitalism’ and ‘communism’, and then go one step deeper and talk about these terms with people of different political orientations. Their interpretations of these words will differ vastly.

And of course you may say ‘but some of these definitions are clearly wrong!!’. However, this doesn’t change the fact of how they are used and with what kind of understanding. Don’t forget that everybody is ignorant. In some areas more, in others maybe less, but the most problematic thing is that none of us is aware of our own ignorance until somebody pokes us and extends our world view by providing new information that we may or may not adapt to.

In the end we shouldn’t forget that language is an abstraction layer, a set of symbols that refer to things in physical or social reality, and social realities especially tend to change. This shows how much the understanding of a language depends on its interpretation. Language therefore has no absolute meaning. As I like to say: ‘nothing is absolute, everything depends on context.’

First Conclusion

Let me end here by saying that self-expression is of course also an important part. You may have writers and poets who are able to use writing as a way to express themselves, but also think about musicians who have processes running to know how hard to press the next piano key, how much pressure to put on the bow of their violin or how to push the air out through their mouth to make the trumpet produce exactly the sound they are looking for.

Be aware that every living thing is intelligent in a different way. Taking music as an example, some may not even be able to perceive whether a sound has the frequency of the note ‘c’ or of another note. Not everybody has absolute pitch, right? The capabilities can differ a lot from one to another.

What I want you to try out is to go through your daily life and ask yourself whether something is intelligent, and if yes, in what form? And what is the difference between things that are intelligent and things that are preprogrammed and make decisions based on predefined algorithms? Really, what is the difference here?

Artificial intelligence or so they say

What triggered my reflection on the term ‘intelligence’ so strongly? Well, it was the rising topic of artificial intelligence. I was wondering what the different subcategories of AI are, how they work in detail, what media of all kinds like to tell us about it, and what people project onto it because of its fancy name.

Basically, is artificial intelligence intelligent? If yes, how? If no, why not?

Machine learning

What machine learning (which is basically what today’s ‘artificial intelligence’ was called before) does is create an interface between machines and humans, where a machine attempts to recognise patterns in a human communication method it is given, e.g. spoken or written language, but also visual input. Be aware that machine learning can theoretically be given any kind of data. The question we want to ask ourselves is: what patterns do we expect it to identify there, and if it doesn’t find what we want, is it because the data is missing certain variables?

While machine learning in the previous decade focused more on supervised learning, the things we now see associated with AI rely more on learning without hand-made labels. In supervised learning you tell the machine how things are labelled: a picture of a dog is labelled ‘dog’ and a picture of a cat is labelled ‘cat’. From the attributes it has learned in these pictures, it can then say for a new picture whether it shows a cat or a dog. In unsupervised learning you provide a big set of pictures of dogs and cats without any labels. The machine groups the pictures by attributes it has found on its own and can tell you which group a new picture falls into, but it is up to us to figure out what each group means. LLMs sit somewhere in between: their training is usually described as self-supervised, because the ‘label’ is taken from the data itself, e.g. the next word of a text.
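To make the difference a bit more tangible, here is a minimal sketch in Python. Everything in it is an assumption for illustration: each picture is reduced to two made-up numbers (ear pointiness and snout length), the supervised part is a simple nearest-centre rule, and the unsupervised part is a tiny 2-means clustering. Real systems work on far richer data, so take it as a toy, not as how any actual product does it.

```python
from math import dist

# Made-up features per picture: (ear pointiness, snout length), both between 0 and 1.
cats = [(0.9, 0.2), (0.8, 0.1), (0.85, 0.25)]
dogs = [(0.3, 0.8), (0.2, 0.9), (0.35, 0.7)]


def centroid(points):
    """Average point of a group."""
    xs, ys = zip(*points)
    return (sum(xs) / len(xs), sum(ys) / len(ys))


def classify_supervised(picture, labelled):
    """Supervised: labels are given, answer with the label of the nearest group centre."""
    centres = {label: centroid(points) for label, points in labelled.items()}
    return min(centres, key=lambda label: dist(picture, centres[label]))


def cluster_unsupervised(points, rounds=10):
    """Unsupervised: a tiny 2-means clustering, no labels. Returns a group number per point."""
    centres = [points[0], points[-1]]  # naive start: first and last point
    groups = []
    for _ in range(rounds):
        # Assign every point to its nearest centre, then move the centres.
        groups = [min((0, 1), key=lambda g: dist(p, centres[g])) for p in points]
        for g in (0, 1):
            members = [p for p, grp in zip(points, groups) if grp == g]
            if members:
                centres[g] = centroid(members)
    return groups


new_picture = (0.75, 0.3)
supervised_answer = classify_supervised(new_picture, {"cat": cats, "dog": dogs})
unsupervised_groups = cluster_unsupervised(cats + dogs)
print(supervised_answer)    # a word that we handed to the machine
print(unsupervised_groups)  # only group numbers, nobody told it what they mean
```

The supervised half can only answer with words it was given. The unsupervised half finds two groups on its own, but whether group 0 means ‘cat’, ‘pointy ears’ or something else entirely is left for us to interpret.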

However, be aware that the results can be heavily influenced by the data set you provide. If your dog pictures are all taken in nature with a collar around the neck and your cats are all at home on the couch, your machine may actually have learned to detect collars and furniture. This is a trap many projects have already fallen into, like the often-told story of detecting certain types of tanks but actually detecting the snowy landscape, or detecting skin cancer but actually detecting the ruler a doctor has put next to the spot on your skin.

The problem with machine learning, especially unsupervised learning, is that we can’t really know what is hidden behind the numbers of a certain feature the machine has detected. Is it detecting a dog, a collar or something else?
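The collar trap can be reproduced with a few lines of Python. This is only a sketch under loud assumptions: the pictures are reduced to three made-up yes/no features, and the ‘learner’ is deliberately naive and just keeps the single feature that separates the training labels best. No real image model works this simply, but the failure has the same shape.

```python
# Each made-up "picture" is reduced to yes/no features plus its true label.
training = [
    {"collar": 1, "outdoors": 1, "long_snout": 1, "label": "dog"},
    {"collar": 1, "outdoors": 1, "long_snout": 0, "label": "dog"},
    {"collar": 1, "outdoors": 1, "long_snout": 1, "label": "dog"},
    {"collar": 0, "outdoors": 0, "long_snout": 0, "label": "cat"},
    {"collar": 0, "outdoors": 0, "long_snout": 0, "label": "cat"},
    {"collar": 0, "outdoors": 0, "long_snout": 1, "label": "cat"},
]
FEATURES = ["long_snout", "collar", "outdoors"]


def accuracy(feature, pictures):
    """Share of pictures where 'feature present' correctly means 'dog'."""
    hits = sum((p[feature] == 1) == (p["label"] == "dog") for p in pictures)
    return hits / len(pictures)


def learn_best_feature(pictures):
    """A deliberately naive learner: keep the single feature that scores best."""
    return max(FEATURES, key=lambda f: accuracy(f, pictures))


def predict(feature, picture):
    """Say 'dog' whenever the learned feature is present."""
    return "dog" if picture[feature] == 1 else "cat"


learned = learn_best_feature(training)
# A dog on the couch without a collar: the shortcut breaks.
couch_dog = {"collar": 0, "outdoors": 0, "long_snout": 1, "label": "dog"}
print(learned, accuracy(learned, training), predict(learned, couch_dog))
```

On the training pictures the learned rule looks perfect, because in this data set every dog wears a collar. The moment a dog shows up on the couch without one, the machine calls it a cat.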

Large Language Models (LLMs)

I’ve taken a deeper look into Large Language Models (LLMs), the stuff you may know from the tool ChatGPT. Basically, what they did was provide a huge set of texts and let the machine identify patterns in it. Things that appear together are therefore placed closer together in the resulting model.

Imagine you have a two-dimensional space, i.e., a graph with an x-axis and a y-axis. The x-axis represents the age of an object, while the y-axis represents kinds of objects, starting with living things and ending with stuff that is not alive. The further right we go on the x-axis, the older something is. For example, a house may become a ruin, while a human starts as a baby and turns into a child, teenager, adult, old person and finally a dead person. A dog sits close to humans but starts as a puppy. This means a puppy and a baby are much closer together than a dead person and a ruin, because puppy and baby both belong to the living things, while a ruin was never alive at any point and will therefore be high up on the y-axis.

Attributes like these are inherited from the data set that was provided. However, this also means that the model contains the biases of the text data it was given.
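The picture above can be written down as a small Python sketch. The coordinates are invented by hand just to mirror the example (x is age from 0 to 1, y is 0 for humans, slightly above for dogs, 1 for things that were never alive). In a real model nobody sets these numbers by hand and there are far more than two dimensions.

```python
from math import dist

# Hand-made coordinates (assumption): x = age (0 young, 1 old),
# y = kind of object (0 human, 0.1 dog, 1 never alive).
space = {
    "baby": (0.0, 0.0),
    "puppy": (0.0, 0.1),
    "dead person": (1.0, 0.0),
    "house": (0.0, 1.0),
    "ruin": (1.0, 1.0),
}


def distance(a, b):
    """Straight-line distance between two words in the made-up space."""
    return dist(space[a], space[b])


def nearest(word):
    """The other word that sits closest to `word`."""
    others = (w for w in space if w != word)
    return min(others, key=lambda w: distance(word, w))


print(distance("baby", "puppy"))        # small: same age, both living
print(distance("dead person", "ruin"))  # large: same age, but different kind
print(nearest("baby"))
```

‘Closeness’ here is nothing more than a distance between points. Whatever ended up near each other in the training data ends up near each other in the space.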

For example, when you train your LLM only on data sets about technology and project management but not on texts about issues in society, the LLM will only know the term PoC in the sense of “Proof of Concept”, not in the sense of “People of Colour”.

Of course you can provide new data that makes an LLM adapt to this new information, but the question is: how much data do you need to provide to have a significant impact on its responses? And will this require an adaptation of the whole model? How much of it needs to be changed, or will it only be something like an add-on?
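A rough way to see this effect is to count which words show up in the same sentence as ‘poc’. The sketch below is a stand-in, not how an LLM is actually trained: the two tiny corpora, the stopword list and the function `neighbours` are all invented for illustration. It only shows that a model cannot associate a term with something that never appeared in its text.

```python
from collections import Counter

# Two tiny made-up corpora (assumption), already lowercased.
tech_corpus = [
    "the team built a poc before the project kickoff",
    "our poc shows the concept works on the test server",
]
society_corpus = [
    "the panel invited poc authors to speak about racism",
    "many poc face discrimination on the housing market",
]
STOPWORDS = {"the", "a", "on", "to", "our", "about", "before", "many"}


def neighbours(word, corpus):
    """Count the words that share a sentence with `word`."""
    counts = Counter()
    for sentence in corpus:
        tokens = sentence.split()
        if word in tokens:
            counts.update(t for t in tokens if t != word and t not in STOPWORDS)
    return counts


tech_only = neighbours("poc", tech_corpus)
mixed = neighbours("poc", tech_corpus + society_corpus)
print(sorted(tech_only))  # only project vocabulary
print(sorted(mixed))      # project vocabulary plus the social meaning
```

With the technology texts alone, ‘poc’ only ever sits next to words like ‘project’ and ‘concept’. The second meaning shows up only once texts that use it are part of the data.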

Problems with AI models

There is something that almost everybody will notice very fast when using AI models. They require context, they won’t ask for more information but push out the most probable answer, and they produce output that we call “hallucinations”. If you haven’t had this experience so far, try asking ChatGPT about something you have expert knowledge in and see how unsatisfying its answer will be.

What we need to become aware of is that AI doesn’t have a continuous input of data. LLMs especially only perceive what we provide as input, what we write, and nothing else. AI doesn’t perceive reality because it doesn’t have access to it. It can’t perceive how we write or how our voice sounds, whether we are writing in an angry, annoyed or happy way. It doesn’t know whether we work at a bakery or as a politician. It only has this one limited dimension available as its input and output.

We basically have created a machine that can perceive text, process it based on its training data and produce text that sounds reasonable, but it doesn’t have all the associations that language was born of. Like when we use the word ‘dog’, we associate it with images, with smells, with movements in our visual imagination, with how dogs sound, how they express their needs or how they may even have attacked us.

We associate words with a whole body experience. And that’s something that a machine doesn’t have.

Is AI intelligent?

Well, coming to the final question: is AI intelligent or not? Based on our definition from the beginning, yeah, sure, right? It can perceive language, detect patterns in it, predict and interpolate information (like knowing which word you meant, even if you misspelled it), adapt to changes, and it can express a response.

The question is, is it an expression of the self? This would require a definition of ‘self’, and for some people it may even require a definition of ‘consciousness’. However, that’s a question I don’t want to answer in this post.

I think the more important part to realise is how much our language is actually interwoven with our whole-body perception of reality, and how much language becomes random and kind of useless when these associations are not known to the one who expresses themselves through this communication channel and abstraction layer.

An additional side-thought I have is that a self possesses the ability to continuously process information and to interact with its outside world, even if not prompted, and that for sure isn’t the case for AI right now. And that’s why it is still more a machine that can mimic ‘intelligence’ rather than really having it.

Last Conclusion

So, if somebody says that someone or something is smart or dumb, it is actually much more a revelation of what they themselves can perceive, process and self-express. It is always a comparison of themselves with other things in their perceived reality. It has nothing to do with whether someone else is actually ‘intelligent’ or not and in what dimension. When someone can’t understand someone else, they may assume the other one is dumb or smart. Dumb because the other one may not speak the same language, smart because they use ‘big’ words that are unknown to the observer.

What does this mean for us in the end?

When we perceive others, we may want to check whether we project our assumptions onto them or whether they project their assumptions onto whatever topic is being discussed at this moment. Do you both have the same understanding of what you communicate? Is what they express really meant for you, or is it an attempt to express their own default thoughts or current inner state? Like … are they self-aware in this moment or are they running a program that executes learned default actions?

Again, this is not to judge anyone. It is to recognise reality for what it is. Always relative and context dependent.
